A Maybe Kinda Secure Way to Evaluate Untrusted Javascript in a Web Browser*

*Disclaimer: the approach outlined here very likely has security risks

I’m not a security expert and I don’t guarantee that the approach laid out in this article is secure. In fact, part of why I’m writing this article is because I’d love for someone to point out the flaws with my approach, which very likely exist.

That said, I’m also writing this article because I couldn’t find great online resources about sandboxing Javascript, and felt that I should publish my learnings.

Background, or why I’m executing untrusted code in the first place

A collaborator and I are developing a successor to Methodable. The new tool will allow people to produce beautiful interactive guides.

One key feature of our tool is the ability to create guides that run computations in the background and incrementally render custom content for each user. We want to build a tool where any author can create magical interactivity without writing code, but we also want to allow authors to write executable snippets of Javascript in case they want additional customizability.

An example: board game instructions

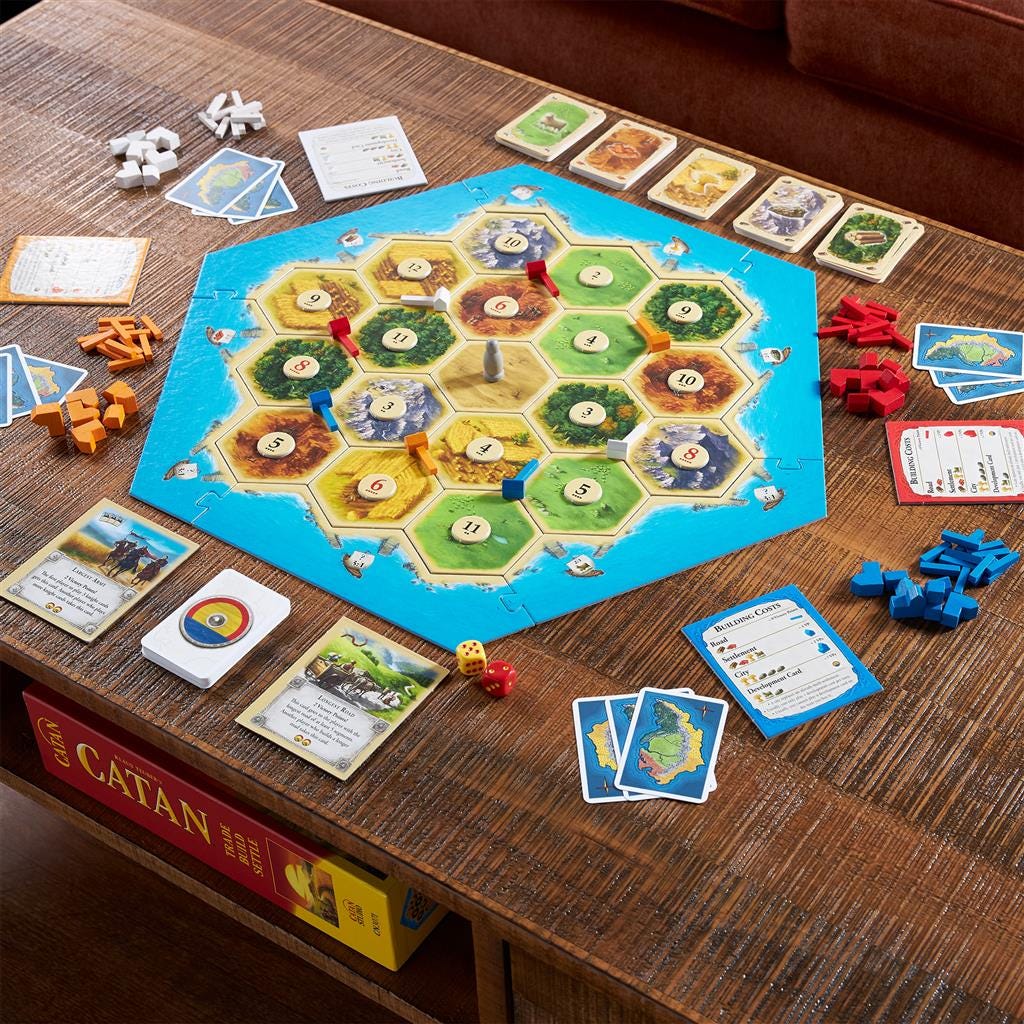

Suppose someone wants to make an interactive guide to the board game Catan.

First the author wants to ask how many people are playing, with six numbered buttons to click, and to assign the value of the clicked button to a variable called players. Here’s how that would look in the authoring UI:

The author also wants the guide to automatically evaluate whether the players need an expansion pack, so they add a code block:
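As a rough illustration, such a code block could contain a single declaration. The variable name needsExpansion is an assumption made for this sketch, and players is hard-coded only so the snippet runs on its own; in a guide it would come from the button the reader clicked.

```javascript
// Stand-in for the value the guide assigns when the reader clicks a button.
let players = 5;

// Hypothetical author code: the base game seats up to four players,
// so five or six players need the expansion.
let needsExpansion = players > 4;
```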

Finally the author adds a conditional branch so that if the players need an expansion pack, a special section of the guide appears:

The user-submitted code creates an interactive experience in the published guide

How would the published guide look? If a group of five Catan players were to click the button “5” then they would see the following:

Hopefully this simple example demonstrates the utility of allowing authors to write code that runs in the background while a guide renders.

So how do we actually go about evaluating Javascript within our web app? First off, some technical goals:

Goals for the code evaluation sandbox

API goals: simulate a persistent execution context

In general, we want authors who write code to feel like they have a global execution context that they can tap into at any point in a document/guide’s lifecycle. To put that in more concrete terms, we have the following three goals for the API of our code execution environment:

Goal 1: Allow declarations to a secure global context

We want to allow users to add variables to the context using normal Javascript declarations, like so:

let myText = "hello world"

and like:

const {h, w} = {h: "hello", w: "world"}

Goal 2: Be able to reference variables from the secure global context in evaluations

We want to allow the user to reference variables from that context in later code snippets, like so:

let helloWorld = h + " " + w

Goal 3: Allow passing values out of the secure global context

We want to evaluate expressions and access the resulting values from the outer context (from the browser window):

helloWorld.length > 5

Security goals: untrusted code has no access to client secrets

I intend for user-submitted Javascript code to be unable to read from or write to anything other than its fenced-off execution context.

I am tentatively okay with allowing the user-submitted code to have side effects and make requests as long as it’s sandboxed off from any end-user data or browser storage. I am not 100% convinced that this is safe; again, please let me know if you have criticisms of my approach.

The Approach

You can see all of the code at this link. (Note that we sometimes use the word “flogram” as shorthand for “user-submitted code”.)

Read on for a breakdown of the approach, which uses Web Workers, static analysis, and the Function constructor.

1. Evaluate user-submitted code in a Web Worker for sandboxed code execution

The advice about evaluating arbitrary Javascript that I came across most often was “don’t do it”. The second piece of advice I came across was to either “evaluate your code in a Web Worker” or “evaluate the code in a secure execution environment on your server.” We wanted an approach that works with our so-far client-rendered app, so we went with Web Workers.

What’s a Web Worker and why is it more secure?

According to the MDN Web Docs:

Web Workers are a simple means for web content to run scripts in background threads.

Web workers run in another global context that is different from the current window. Thus, using the window shortcut to get the current global scope (instead of self) within a Worker will return an error.

So, code evaluated within a worker cannot access the client’s Window. This closes off some XSS-style attacks, such as session hijacking. Still, be aware that Web Workers were not designed for sandboxed code execution; that’s only an incidental ability. A worker can still make network requests with fetch and open IndexedDB databases for its origin, so it is not cut off from everything.
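One way to check what a worker can and cannot reach is to probe its global scope. The sketch below is an illustration only: describeScope is a hypothetical helper, and the stand-in object exists so the code can run outside a browser. Inside a real worker script one would pass self instead.

```javascript
// Reports which well-known globals exist on a given global scope object.
function describeScope(scope) {
  return {
    hasWindow: typeof scope.window !== "undefined",
    hasDocument: typeof scope.document !== "undefined",
    hasLocalStorage: typeof scope.localStorage !== "undefined",
    hasFetch: typeof scope.fetch === "function",
    hasIndexedDB: typeof scope.indexedDB !== "undefined",
  };
}

// Inside a worker script: postMessage(describeScope(self));
// Stand-in for a worker global scope, so the sketch runs anywhere:
const fakeWorkerScope = { fetch() {}, indexedDB: {} };
console.log(describeScope(fakeWorkerScope));
```

In current browsers I would expect a real dedicated worker to report no window, document, or localStorage, but to report both fetch and indexedDB, which is worth keeping in mind when deciding what the sandbox actually protects.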

Note: our approach uses a Web Worker and not a Service Worker. Service workers have more permissions and may pose more of a security risk.

2. Use the Function constructor for scoped evaluation

MDN recommends we use the Function constructor instead of eval to create a blank canvas for execution. Here’s how we apply the Function constructor when we’re trying to evaluate code to return a value:
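A minimal sketch of this step might look like the following. The parameter names toEval and context come from the description in this section; the function name evaluateExpression and the __ctx parameter are assumptions made for illustration.

```javascript
// Evaluates an expression string against a context object and returns its value.
// Note: the Function constructor only scopes variables. The generated function can
// still reach the surrounding global scope, so the isolation comes from the worker.
function evaluateExpression(toEval, context) {
  // One `let` per context variable, reading its value from the __ctx argument.
  const declarations = Object.keys(context)
    .map((name) => `let ${name} = __ctx[${JSON.stringify(name)}];`)
    .join("\n");

  // Newlines around the expression keep a trailing `//` comment from
  // swallowing the closing parenthesis.
  const body = `"use strict";\n${declarations}\nreturn (\n${toEval}\n);`;

  return new Function("__ctx", body)(context);
}

console.log(evaluateExpression("helloWorld.length > 5", { helloWorld: "hello world" })); // true
```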

In this code snippet, we take in a string of user-submitted code (toEval) to evaluate and a context to evaluate it against, then create a code string that declares all of the context variables and finishes with a return statement that returns the evaluated user-submitted code. That code string is passed to the Function constructor, where it’s executed in a scoped function, and the result is returned.

How do we generate all of the context declarations/assignments for the code string? Like so:
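The sketch below shows one possible way to build those declarations. The name buildContextDeclarations, the __ctx parameter, and the identifier check are all assumptions; the check is there because context keys end up inside generated code, so an unexpected key could otherwise inject statements.

```javascript
// Conservative ASCII-only identifier check (real Javascript identifiers allow more).
const IDENTIFIER = /^[A-Za-z_$][A-Za-z0-9_$]*$/;

// Returns one `let` declaration per context key, each reading from `contextParam`.
// Reserved words such as `class` pass the regex but will make the Function
// constructor throw a SyntaxError later; this sketch does not handle that case.
function buildContextDeclarations(context, contextParam = "__ctx") {
  return Object.keys(context)
    .map((name) => {
      if (!IDENTIFIER.test(name)) {
        throw new Error(`Invalid variable name: ${name}`);
      }
      return `let ${name} = ${contextParam}[${JSON.stringify(name)}];`;
    })
    .join("\n");
}

console.log(buildContextDeclarations({ h: "hello", w: "world" }));
```

Reading values from an argument, rather than serializing them into the code string, avoids having to escape the values themselves.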

3. Use static analysis to determine what new variables the user is declaring

In order to allow the user to declare new variables, we need to know what new variables the user declares to save those new variables back into the context. It turns out that this is very difficult and Javascript basically doesn’t allow you to do that, at least not inside of functions:

In Javascript, you can’t access the function scope as an object (not since the with statement was deprecated). You can access things within the function scope but you cannot iterate over the keys and values in the scope, meaning you cannot tell what new variables have been declared in a function scope.

Here are three possible solutions to this conundrum:

Possible solution 1: evaluate everything in the Web Worker’s global scope

Unlike function scopes, Javascript does actually allow you to iterate over the contents of the global scope, so we could evaluate user code in the global scope and then scrape the variables back out, but this gets very messy because the Web Worker global scope is full of all kinds of variables already. For example, a user-created variable called name would collide with the self.name property that already exists in the Web Worker.

name is a very popular variable name, so this is not a good solution.

Possible solution 2: just give users a “context” object that they can add properties to instead of making new declarations

We didn’t do this. Maybe it’s still a good idea. But for the time being, we’re dead set on the code experience feeling like just coding in a global context and declaring variables.

Possible solution 3 (winner): use static analysis to find declarations

We decided to use the esprima library to parse the user-submitted JS and identify newly declared variables, using the function getDeclaredIdentifiers below:
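A sketch of what such a function could look like is shown here; it is an illustration, not necessarily the exact implementation. To stay runnable without any dependency it takes an already-parsed ESTree-style AST (in practice the result of esprima.parseScript(code)) and is exercised with a hand-written AST. Throwing on unsupported patterns is a choice made for this sketch.

```javascript
// Collects the names bound by a single ESTree pattern node.
function getIdentifiersRecursive(pattern, found = []) {
  switch (pattern.type) {
    case "Identifier":
      found.push(pattern.name);
      break;
    case "ObjectPattern":
      for (const prop of pattern.properties) {
        // Property nodes keep the bound pattern in `value`, RestElement in `argument`.
        const target = prop.type === "RestElement" ? prop.argument : prop.value;
        getIdentifiersRecursive(target, found);
      }
      break;
    case "AssignmentPattern": // default value, e.g. const {h = "hi"} = obj
      getIdentifiersRecursive(pattern.left, found);
      break;
    default:
      // ArrayPattern and anything else is deliberately unsupported here.
      throw new Error(`Unsupported declaration pattern: ${pattern.type}`);
  }
  return found;
}

// Returns the names declared by top-level var/let/const statements.
function getDeclaredIdentifiers(ast) {
  const names = [];
  for (const statement of ast.body) {
    if (statement.type !== "VariableDeclaration") continue;
    for (const declarator of statement.declarations) {
      getIdentifiersRecursive(declarator.id, names);
    }
  }
  return names;
}

// Hand-written AST for: let a = 3; const {h, w} = {h: "hello", w: "world"}
// (initializers are omitted because only the left-hand sides matter here).
const id = (name) => ({ type: "Identifier", name });
const ast = {
  type: "Program",
  body: [
    { type: "VariableDeclaration", kind: "let",
      declarations: [{ type: "VariableDeclarator", id: id("a"), init: null }] },
    { type: "VariableDeclaration", kind: "const",
      declarations: [{ type: "VariableDeclarator", init: null, id: {
        type: "ObjectPattern",
        properties: [
          { type: "Property", key: id("h"), value: id("h") },
          { type: "Property", key: id("w"), value: id("w") },
        ] } }] },
  ],
};

console.log(getDeclaredIdentifiers(ast)); // [ 'a', 'h', 'w' ]
```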

Note: in the code above we catch “normal” declarations like let a = 3, and declarations that destructure from an object (using the recursive sub-function getIdentifiersRecursive), but not array-based destructuring, and possibly not other variable declaration patterns that I’m unaware of. For now, users will make do with the limited selection.

Now that we know what new variables are declared in the user’s code, we can generate code to place at the end of our code string in cases where we are declaring new variables:
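One simple form this trailing code could take is a return statement that collects every known variable into an object. The helper name buildContextReturn is hypothetical, and returning the existing names alongside the new ones (so reassignments are captured too) is an assumption of this sketch.

```javascript
// Builds the final statement of the generated function body: an object literal
// using shorthand properties for every existing and newly declared variable.
function buildContextReturn(existingNames, declaredNames) {
  // A Set removes duplicates while preserving first-seen order.
  const all = [...new Set([...existingNames, ...declaredNames])];
  return `return { ${all.join(", ")} };`;
}

console.log(buildContextReturn(["h", "w"], ["helloWorld"])); // return { h, w, helloWorld };
```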

So, putting that all together we can now take a context and some user-submitted code, evaluate the code against the context, and return the new context, including newly declared variables:
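The combined step could be sketched roughly as follows. The function name executeAgainstContext and the __ctx parameter are assumptions, and the list of declared names is passed in as an argument here (standing in for the static analysis result) so that the example has no dependencies.

```javascript
// Runs user code against a context and returns the updated context.
// `declaredNames` is the list of variables the static analysis step found in `code`.
function executeAgainstContext(code, context, declaredNames) {
  const existingNames = Object.keys(context);

  // Re-create every context variable as a local `let`.
  const declarations = existingNames
    .map((name) => `let ${name} = __ctx[${JSON.stringify(name)}];`)
    .join("\n");

  // Export old and new variables so reassignments are saved as well.
  const exported = [...new Set([...existingNames, ...declaredNames])].join(", ");

  const body = `"use strict";\n${declarations}\n${code}\nreturn { ${exported} };`;
  return new Function("__ctx", body)(context);
}

const nextContext = executeAgainstContext(
  'let helloWorld = h + " " + w',
  { h: "hello", w: "world" },
  ["helloWorld"]
);
console.log(nextContext); // { h: 'hello', w: 'world', helloWorld: 'hello world' }
```

One limitation of this particular sketch: if the user code re-declares a name that is already in the context (for example a second let h), the generated function fails with a SyntaxError, so a real implementation would need to decide how to handle that.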

4. Wrap it all up in an interface

Web Workers are messy and I try to abstract the handling of the workers away from the rest of my code as much as possible. I ended up with this interface:
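A promise-based wrapper along these lines is one way to get such an interface. Every name in this sketch (createSandboxClient, executeCode, evaluateCondition, the message shape, and the worker file name in the comment) is hypothetical. The worker is created through a factory argument so the wrapper can be exercised with a fake worker outside the browser.

```javascript
// Wraps a worker behind two promise-returning functions.
// `createWorker` returns an object with postMessage, terminate, onmessage, onerror.
// In a browser it might be: () => new Worker("sandbox-worker.js")
function createSandboxClient(createWorker, timeoutMs = 1000) {
  function run(type, code, context) {
    return new Promise((resolve, reject) => {
      const worker = createWorker(); // fresh worker per call, so it can be killed safely

      const finish = (settle, value) => {
        clearTimeout(timer);
        worker.terminate();
        settle(value);
      };

      // An infinite loop in user code never posts a reply, so give up after a while.
      const timer = setTimeout(
        () => finish(reject, new Error("Sandboxed code timed out")),
        timeoutMs
      );

      worker.onmessage = (event) => {
        if (event.data.error) finish(reject, new Error(event.data.error));
        else finish(resolve, event.data.result);
      };
      worker.onerror = (event) => finish(reject, new Error(event.message));

      worker.postMessage({ type, code, context });
    });
  }

  return {
    // Resolves with the updated context after running declarations/statements.
    executeCode: (code, context) => run("execute", code, context),
    // Resolves with a boolean for a user-submitted condition.
    evaluateCondition: (code, context) =>
      run("evaluate", code, context).then((result) => Boolean(result)),
  };
}
```

Creating a fresh worker for every call is slower than reusing one, but it means a runaway snippet can simply be terminated without affecting later evaluations.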

These two simple function interfaces allow a guide experience to gradually build up a context by executing user-submitted code, and to evaluate user-submitted boolean conditions against that context as well. All user-submitted code execution is done in Web Workers in a separate file.

The screenshots in this article are not the complete code. Once again, here’s all of the code.

Final thoughts and security concerns

Everything that touches values generated by user-supplied code is a potential vulnerability

Even if user-submitted code cannot be used maliciously within our Web-Worker sandbox, we still have to be very careful with how we handle the resulting values outside of our sandbox. For example, if we allow users to display variable values in the guide, that means that at the very least the toString function is being called on values that were generated by untrusted code, which would be a vulnerability. This calls for strict validation before doing anything based on the results of user-submitted code.

Even then, I am not sure if it’s safe to store untrusted-code-generated context in the main thread, even if it’s just being stored and passed back to the Web Worker: perhaps there’s some way that the stored context could contain code that asynchronously gains access to the main browser thread. Again, data validation could prevent this, and it may be necessary to limit the kinds of values that are allowed to live in the persistent context (more research needed!).
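As one possible shape for that validation, the sketch below accepts only JSON-like data: strings, booleans, finite numbers, null, and arrays or plain objects made of those. The name isPlainData and the depth limit of 20 are assumptions. Values that arrive via postMessage have already been through the structured clone algorithm, which refuses to clone functions, so a check like this is an extra layer rather than the only defence.

```javascript
// Returns true only for JSON-like data with no functions, accessors, or class instances.
function isPlainData(value, depth = 0) {
  if (depth > 20) return false; // also stops cyclic structures from recursing forever
  if (value === null) return true;

  switch (typeof value) {
    case "string":
    case "boolean":
      return true;
    case "number":
      return Number.isFinite(value); // rejects NaN and Infinity
    case "object": {
      const proto = Object.getPrototypeOf(value);
      const allowed = [Object.prototype, Array.prototype, null];
      if (!allowed.includes(proto)) return false; // Date, Map, class instances, ...

      // Inspect descriptors instead of reading properties, so getters never run.
      const descriptors = Object.getOwnPropertyDescriptors(value);
      return Object.values(descriptors).every(
        (desc) => "value" in desc && isPlainData(desc.value, depth + 1)
      );
    }
    default:
      return false; // functions, symbols, undefined, bigint
  }
}

console.log(isPlainData({ players: 5, names: ["Ann", "Bo"] })); // true
console.log(isPlainData({ toString() { return "gotcha"; } })); // false
```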

There are other reasonable approaches for sandboxed code execution

Many sandboxes like Replit evaluate code server-side.

Another approach is to follow the lead of Observable, which has an entirely custom quasi-Javascript language and parser. But Observable still doesn’t recommend that users fork untrusted notebooks (and I assume Replit would not recommend that people fork untrusted REPLs). There’s always inherent unpredictability when allowing people to run each other’s code.

Conclusion

I’ve presented a hacky but functional approach to giving users an in-browser code-execution sandbox. Let me know if you see any glaring security issues with my setup. And get psyched to start playing around with our magical document authoring experience soon :))

til next time,

Daniel